Fraud detection sounds simple until you try to build it. At first, it feels like a classification problem. Then you realize it is really a system design problem. You are not just deciding whether something is fraud. You are building a pipeline that transforms raw claims data into actionable information for an investigator.

This project started as a way to understand how modern fraud systems are actually designed. Not just the models, but the full flow from data to decision support.

You can explore the live demo here:

https://fraud-detection-dx7or8vbx6cl3y6srk2gnn.streamlit.app/

Why a Hybrid Approach

One of the first decisions I had to make was how to approach detection. Should I rely on rules, machine learning, or LLMs?

The answer turned out to be none of them alone.

Each technique solves a different part of the problem. Rules are fast and explainable, but limited to known patterns. Anomaly detection can surface unusual behavior, but does not explain itself. LLMs are powerful for generating context, but they should not be trusted as decision engines.

The key design choice was to clearly separate responsibilities. Rules detect known patterns. Anomaly detection highlights unusual claims. The LLM explains why a claim was flagged.

From Raw Data to Fraud Signals

Raw claims data is not very useful on its own. Dates, amounts, and text fields do not, by themselves, indicate whether something is suspicious.

The value comes from transforming that data into meaningful signals.

Instead of using raw dates, the system computes how quickly a claim was filed after policy activation. Instead of looking at individual claims in isolation, it captures behavioral patterns such as how frequently a customer files claims. It also introduces relational signals, like how often the same phone number or repair shop appears across different claims.
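To make these three kinds of signal concrete, here is a minimal sketch using only the Python standard library. The field names (`policy_start`, `claim_date`, `phone`, `repair_shop`) and the sample records are assumptions made for illustration, not the project's actual schema.

```python
from collections import Counter
from datetime import date

# Hypothetical claim records; field names and values are illustrative only.
claims = [
    {"claim_id": "C1", "customer_id": "A", "phone": "555-0101", "repair_shop": "Shop X",
     "policy_start": date(2024, 1, 1), "claim_date": date(2024, 1, 5)},
    {"claim_id": "C2", "customer_id": "A", "phone": "555-0101", "repair_shop": "Shop Y",
     "policy_start": date(2024, 1, 1), "claim_date": date(2024, 6, 1)},
    {"claim_id": "C3", "customer_id": "B", "phone": "555-0101", "repair_shop": "Shop X",
     "policy_start": date(2023, 3, 1), "claim_date": date(2024, 2, 1)},
]

def build_features(claims):
    """Turn raw claim records into timing, behavioral, and relational signals."""
    # Count how often each customer, phone number, and repair shop appears.
    claims_per_customer = Counter(c["customer_id"] for c in claims)
    claims_per_phone = Counter(c["phone"] for c in claims)
    claims_per_shop = Counter(c["repair_shop"] for c in claims)

    features = []
    for c in claims:
        features.append({
            "claim_id": c["claim_id"],
            # Timing signal: days between policy activation and the claim.
            "days_since_activation": (c["claim_date"] - c["policy_start"]).days,
            # Behavioral signal: how many claims this customer has filed.
            "customer_claim_count": claims_per_customer[c["customer_id"]],
            # Relational signals: reuse of contact details and repair shops.
            "phone_claim_count": claims_per_phone[c["phone"]],
            "shop_claim_count": claims_per_shop[c["repair_shop"]],
        })
    return features

features = build_features(claims)
```

In this toy data, claim C1 was filed four days after activation, and the same phone number appears on all three claims, including one from a different customer.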

This combination of cleaning, transformation, and feature engineering is where most of the system’s intelligence actually comes from.

The Detection Pipeline

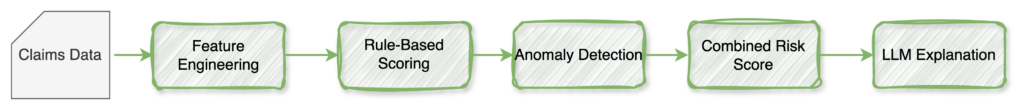

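At a high level, the system follows a layered pipeline: raw claims are cleaned and turned into features, scored by deterministic rules and by anomaly detection, combined into a single risk score, and finally explained by the LLM for the investigator.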

Each stage contributes a different type of signal, and together they create a more complete picture.

Capturing Known Patterns with Rules

The first detection layer is based on deterministic rules. These rules reflect patterns that are commonly associated with fraud.

A claim filed shortly after policy activation is suspicious. So is a repair estimate that is unusually high compared to typical cases. Reuse of the same phone number across multiple claims can indicate coordination. Inconsistencies between the claimant’s story and the adjuster’s observations are another strong signal.

Each of these conditions contributes to a cumulative score. The logic is intentionally simple and transparent because it needs to be explainable to business stakeholders.
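A cumulative rule score of this kind might be sketched as follows. The rule names, thresholds, and point values are all hypothetical, chosen only to show the shape of the logic, and the claim fields are assumed to have been computed upstream.

```python
# Hypothetical rules: (name, points, condition). Thresholds and weights are
# illustrative assumptions, not the values used in the project.
RULES = [
    ("early_claim", 30, lambda c: c["days_since_activation"] <= 14),
    ("high_estimate", 25, lambda c: c["repair_estimate"] > 2 * c["typical_estimate"]),
    ("shared_phone", 25, lambda c: c["phone_claim_count"] >= 3),
    ("story_mismatch", 20, lambda c: c["story_mismatch"]),
]

def rule_score(claim):
    """Return the cumulative score and the names of the rules that fired."""
    triggered = [(name, points) for name, points, check in RULES if check(claim)]
    score = sum(points for _, points in triggered)
    return score, [name for name, _ in triggered]

example_claim = {
    "days_since_activation": 4,
    "repair_estimate": 9000,
    "typical_estimate": 3000,
    "phone_claim_count": 1,
    "story_mismatch": False,
}
score, reasons = rule_score(example_claim)
```

Returning the list of triggered rule names alongside the score is what keeps the result explainable: a stakeholder can see exactly which conditions contributed.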

Detecting the Unknown with Anomaly Detection

Rules only capture what we already know. To detect new or unexpected patterns, I introduced an anomaly-detection layer based on Isolation Forest.

Instead of checking predefined conditions, the model evaluates how unusual a claim is compared to the rest of the dataset. It looks at features such as timing, cost, and prior behavior, and identifies claims that stand out.

This approach is particularly useful when labeled fraud data is limited or unavailable, which is often the case in practice.

It is important to be precise here. The model does not say “this is fraud.” It says “this is unusual.” That distinction matters, especially when these signals are used in decision-making.
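As a rough illustration of this layer, the sketch below fits scikit-learn's `IsolationForest` on synthetic data and converts its output into a 0 to 1 "unusualness" score. The three features, the synthetic values, and the min-max rescaling are assumptions for the example; the project's actual features and settings may differ.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)

# Synthetic features per claim (illustrative only):
# [days_since_activation, repair_estimate, prior_claims]
typical_claims = np.column_stack([
    rng.integers(60, 1000, size=200),
    rng.normal(3000, 500, size=200),
    rng.integers(0, 3, size=200),
])
# One claim that is unusual on every feature.
odd_claim = np.array([[2, 15000, 6]])
X = np.vstack([typical_claims, odd_claim])

model = IsolationForest(n_estimators=100, contamination="auto", random_state=0)
model.fit(X)

# score_samples returns lower values for more anomalous points,
# so negate it to make "higher" mean "more unusual".
raw = -model.score_samples(X)

# Rescale to [0, 1] so the score can later be combined with other signals.
anomaly_score = (raw - raw.min()) / (raw.max() - raw.min())
most_unusual = int(anomaly_score.argmax())
```

Note that no fraud labels appear anywhere in this code. The score only ranks claims by how easily they are isolated from the rest, which is why it should be read as "unusual" and nothing more.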

Combining Signals into a Risk Score

Neither rules nor anomaly detection is sufficient on its own.

Rules provide clarity but are limited. Anomaly detection provides flexibility but lacks direct interpretability.

To balance both, the system combines them into a single score. More weight is given to rules because they are easier to justify, while anomaly detection contributes a second layer of insight.

This combined score is then used to classify claims into low, medium, or high risk. The goal is not to automate decisions entirely, but to prioritize attention where it is most needed.
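One simple way to express this weighting is a linear blend followed by fixed cut-offs. The 0.7 rule weight, the 100-point rule maximum, and the 0.3 and 0.6 band thresholds below are assumed values for illustration, not the project's actual settings.

```python
def combined_risk(rule_points, anomaly_score, rule_weight=0.7, max_rule_points=100):
    """Blend a rule score and an anomaly score (0 to 1) into a score and a band.

    All weights and thresholds here are illustrative assumptions.
    """
    # Normalize the rule score to the 0..1 range, capping at the maximum.
    rule_component = min(rule_points, max_rule_points) / max_rule_points
    # Rules carry more weight because they are easier to justify.
    score = rule_weight * rule_component + (1 - rule_weight) * anomaly_score

    if score >= 0.6:
        band = "high"
    elif score >= 0.3:
        band = "medium"
    else:
        band = "low"
    return round(score, 3), band

risk_score, risk_band = combined_risk(rule_points=55, anomaly_score=0.9)
```

The result is a ranking aid, not a probability: a score of 0.655 does not mean a 65.5% chance of fraud, only that this claim should be reviewed before lower-scoring ones.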

Using LLMs for Explanation

One of the most important design decisions in this project was how to use the LLM.

Instead of using it to detect fraud, it is used to support the investigator.

For each flagged claim, the model generates a concise summary, highlights inconsistencies, extracts key signals, and produces an investigation note. This reduces the time required to understand a case and improves consistency in how cases are reviewed.

The LLM does not make decisions. It makes the system easier to use.

That distinction is critical in any system that requires explainability and auditability.
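One way to enforce that separation is in the prompt itself. The sketch below only assembles the prompt text and deliberately leaves out the model call, since the source does not say which LLM or client library is used. The function name, field names, and wording are hypothetical.

```python
import json

def build_explanation_prompt(claim, risk_band, triggered_rules, anomaly_score):
    """Assemble an explanation prompt; the risk decision is passed in, not requested."""
    context = {
        "claim": claim,
        "risk_band": risk_band,
        "triggered_rules": triggered_rules,
        "anomaly_score": round(anomaly_score, 2),
    }
    return (
        "You are assisting an insurance fraud investigator.\n"
        "The risk band below was already decided by upstream rules and anomaly "
        "detection. Do not change it and do not state whether the claim is fraud.\n"
        "Using only the data provided, write:\n"
        "1. A concise summary of the claim.\n"
        "2. Any inconsistencies between the claimant and adjuster accounts.\n"
        "3. The key signals that led to the flag.\n"
        "4. A short investigation note with suggested next steps.\n\n"
        f"Claim data:\n{json.dumps(context, indent=2, default=str)}"
    )

prompt = build_explanation_prompt(
    claim={"claim_id": "C1", "claimant_story": "Rear-ended at a stop light.",
           "adjuster_notes": "Damage is concentrated on the front bumper."},
    risk_band="high",
    triggered_rules=["early_claim", "story_mismatch"],
    anomaly_score=0.91,
)
```

Because the risk band and triggered rules are inputs rather than outputs, the generated text can be audited against signals that were computed deterministically.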

Making It Usable

To make the system tangible, I built a simple interface using Streamlit.

The interface focuses on clarity. It allows users to browse claims, inspect risk scores, and understand why a claim was flagged. It also presents the generated explanations in an easy-to-read, actionable format.

The goal was not to build a full product, but to demonstrate how the different components come together in a usable workflow.

Limitations and Trade-offs

This is a proof-of-concept, and several limitations are worth noting.

The rule thresholds are manually defined, which means they may not generalize well. The anomaly detection model identifies unusual claims but does not confirm fraud. The scoring system is not calibrated as a probability, and there is no feedback loop from investigators.

These are not flaws so much as natural next steps in the system’s evolution.

What I Would Improve Next

If this system were to move closer to production, the next focus would be on learning from real data and improving feedback loops.

This would include introducing a supervised model once labeled data is available, grounding explanations using policy documents through retrieval, and capturing investigator decisions to continuously improve the system.

Another important direction would be graph-based analysis to detect coordinated fraud across entities such as claimants, repair shops, and contact information.
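As a first step in that direction, claims can be grouped into connected components whenever they share an entity. The sketch below uses a small union-find structure over hypothetical `phone` and `repair_shop` fields. It is an assumption about how such an analysis could start, not something the current system does.

```python
from collections import defaultdict

def linked_claim_groups(claims, keys=("phone", "repair_shop")):
    """Group claims that are connected, directly or transitively, by shared entities."""
    parent = {c["claim_id"]: c["claim_id"] for c in claims}

    def find(x):
        # Follow parent links to the group root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    first_seen = {}  # (field, value) -> first claim that used it
    for c in claims:
        for key in keys:
            entity = (key, c[key])
            if entity in first_seen:
                # Merge this claim's group with the group of the earlier claim.
                parent[find(c["claim_id"])] = find(first_seen[entity])
            else:
                first_seen[entity] = c["claim_id"]

    groups = defaultdict(list)
    for c in claims:
        groups[find(c["claim_id"])].append(c["claim_id"])
    # Only groups with more than one claim are interesting for review.
    return [sorted(g) for g in groups.values() if len(g) > 1]

# Illustrative data: C1 and C2 share a phone, C2 and C3 share a repair shop.
sample_claims = [
    {"claim_id": "C1", "phone": "111", "repair_shop": "X"},
    {"claim_id": "C2", "phone": "111", "repair_shop": "Y"},
    {"claim_id": "C3", "phone": "222", "repair_shop": "Y"},
    {"claim_id": "C4", "phone": "333", "repair_shop": "Z"},
]
clusters = linked_claim_groups(sample_claims)
```

Here C1 and C3 never share anything directly, yet they end up in the same cluster through C2. That kind of indirect link is exactly what per-claim rules cannot see.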

Final Thoughts

This project reinforced a simple idea. Fraud detection is not about choosing a single technique. It is about designing a system that combines different types of signals and supports human decision-making.

The most effective systems balance explainability, flexibility, and usability. They do not try to replace investigators. They help them focus on what matters.

About This Project

This work is part of an ongoing effort to explore how AI can be applied to real-world system design problems.

If you are working on fraud detection, risk systems, or applied AI, I would be happy to exchange ideas.